Govern AI Everywhere.

We Are Setting the Standard for Trusted AI

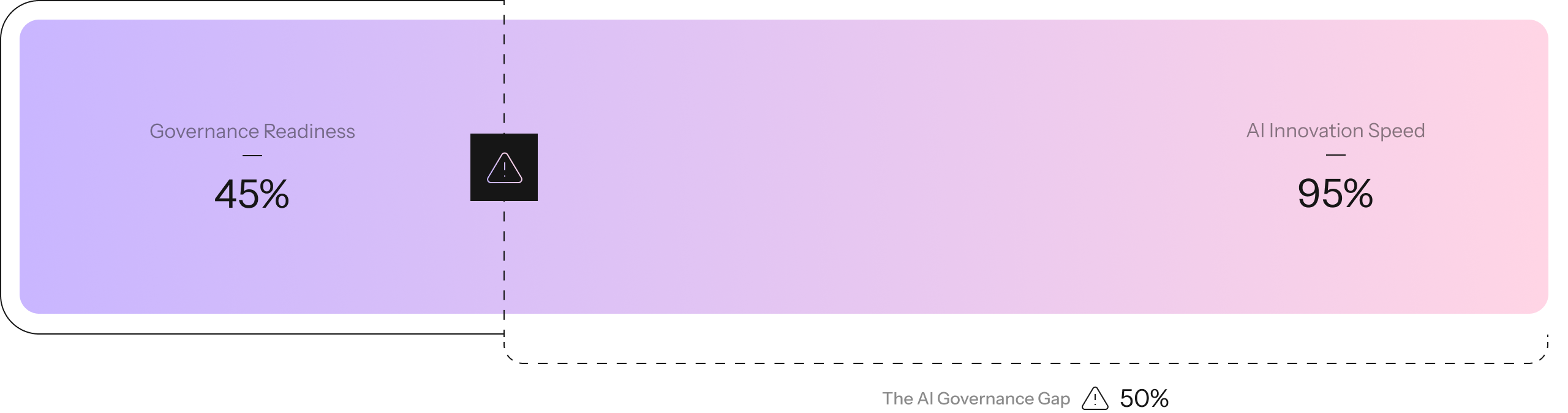

AI Is Moving Faster Than Governance Can Keep Up

Enterprises are deploying AI agents, models, and applications at unprecedented speed. But governance, risk, and compliance processes are still manual, fragmented, and stuck in spreadsheets.

AI Beyond Pilots

The Most Comprehensive, Continuous & Contextual AI Governance

Enterprise AI governance for agents, models, and applications, with pre-built policy packs for major regulatory frameworks such as the EU AI Act, NIST AI RMF, and ISO 42001. Integrates with the systems you already use.

Best-in-Class Technology.

Most Credible Brand.

One platform to govern every AI system — from pilot to production — with native integrations across your existing stack.

AI Registry

Discover and catalog every AI system — agents, models, and applications — across your enterprise. Full visibility into shadow AI.

- Shadow AI detection

- Risk classification

- Stakeholder mapping

- Auto-discovery

Risk Intelligence

Continuous, contextual risk assessment for bias, security, privacy, and compliance — not point-in-time snapshots.

- Continuous monitoring

- Automated red-teaming

- Real-time alerts

- Drift detection

Policy Engine

Enforce governance policies with automated workflows, pre-built policy packs, and audit-ready documentation.

- Pre-built policy packs

- Custom guardrails

- Compliance mapping

- Evidence generation

GAIA

Comprehensive governance across every layer of your AI agent ecosystem — from pre-deployment testing to runtime action enforcement.

What Makes Us Different

We didn't bolt AI features onto a GRC tool. We built the platform for Measurable Trust from scratch, and it shows.

Only Platform with Multi-Layer Governance

Model-level, agent-level, and application-level governance in one unified platform. No other vendor covers all three. We see the full picture — from individual model risks to emergent behaviors across agent networks.

Others focus on a single layer — leaving dangerous blind spots.

Continuous, Not Point-in-Time

Real-time monitoring and continuous risk assessment that evolves with your AI systems. Not quarterly audits or static checklists — always-on governance that catches risks as they emerge.

Traditional GRC tools offer snapshot compliance — already outdated by deployment.

Purpose-Built AI Intelligence

Our governance engine is built on proprietary research and trained on thousands of AI risk scenarios. It understands AI-specific risks like hallucination, drift, and emergent agent behavior — not just generic software risks.

Generic tools bolt on AI features. We were built from day one for AI.

Pre-Built Regulatory Coverage

Ready-to-deploy policy packs for EU AI Act, NIST AI RMF, ISO 42001, SOC 2, and HITRUST — with automated evidence generation and audit-ready documentation that saves teams months of work.

Others require manual policy mapping. We deliver compliance out of the box.

We Set the Standard for Trusted AI

Credo AI is the enterprise platform for AI governance, risk, and compliance, purpose-built so enterprises can trust their AI and prove it.

Purpose-Built for AI Governance

Founded in 2020 with a singular mission: make AI systems trustworthy at enterprise scale. Not a generic GRC tool adding AI features — comprehensive, continuous, and contextual AI governance is all we do.

Trusted by the Fortune 500

From financial services to healthcare, the world's most regulated enterprises trust Credo AI to manage AI risk for agents, models, and applications — while accelerating innovation with Measurable Trust.

Setting the Standard, Thoughtfully

We don't just follow regulations; we help write them. Credo AI actively contributes to the development of the EU AI Act, NIST AI RMF, and ISO 42001 frameworks, working alongside policymakers worldwide.

Best Technology, Most Credible Brand

Forrester Wave Leader. Gartner recognized. World Economic Forum Technology Pioneer. Our proprietary governance engine is built on peer-reviewed research no other platform can match.

Recognized for Our Pioneering Work in AI Governance


Ready to Lead in Trusted AI?

We set the standard for trusted AI — thoughtfully. Join the enterprises achieving Measurable Trust across agents, models, and applications.